This article will explore the journey of bringing machine learning from research and development to production at Ubisoft, a leading global video game company. Specifically, we will dive into creating and evolving a platform to support data scientists and machine learning engineers in building and integrating ML pipelines within the Ubisoft ecosystem. This updated version of my presentation at PyData Montreal in June 2022 will highlight the challenges and successes of implementing ML in production, the future direction of the platform and its role in advancing the use of ML at Ubisoft.

Machine Learning at Ubisoft: A Look Inside

To start this section, I think a quick presentation of Ubisoft is needed :)

Ubisoft is a French company founded in 1986 in Brittany; it opened an office in Montreal in 1997.

Ubisoft has more than 45 offices around the world and around 21,000 employees, about 4,000 of them in Montreal, which makes the MTL office the biggest video game studio in the world. In addition, Ubisoft is well known for multiple brands, from Assassin’s Creed to Just Dance.

Ubisoft is part of the entertainment industry, which represents billions of dollars annually. The video game industry recently surpassed the film industry in terms of profit, and we can now see new actors coming into the market, like Netflix or ByteDance (the company behind TikTok), that want a piece of the cake.

It is worth noting that over 200 people at Ubisoft use machine learning in their work (LinkedIn numbers). These professionals come from various backgrounds and use machine learning to address a wide range of problems, from marketing to game development. This demonstrates Ubisoft’s significant investment in data science and machine learning.

To give you an idea of the kind of projects tackled internally, I made a small selection of projects presented outside of Ubisoft:

- Procedural generation of content (video): developed by Player Analytics France, the idea is to rework the level design workflow by mixing bot interaction and human feedback on the generated levels.

- Deep RL for game development (video, article): developed by La Forge MTL. Pathfinding is a big topic for in-game AI, and this team developed a new solution for navigating bots on a map. It makes it possible to have intelligent non-playable characters that make the game more immersive.

- Zoobuilder (video, paper): developed by the Ubisoft China AI Lab (recently integrated into La Forge), this project tackled an unconventional topic: the motion capture of wild animals. On paper, this is tough to put in place (imagine bringing a giraffe into a motion capture studio), and it raises an ethical question. Still, this team developed a solution that extracts the movement of the animal’s skeleton from videos; to do this, they used game engine data to create the training data for the system.

But this is just the tip of the ML iceberg at Ubisoft, and there are way more projects under the hood.

As you can expect, bringing ML from R&D to production can be complex and challenging; some colleagues gave an excellent illustration of this challenge in their talk at the GDC conference last year. The needs and constraints depend on the kind of application: for example, they are not the same for an in-game AI and for a recommender system in an in-game store. In 2018, there was no real standard solution for bringing ML into a game; it mostly meant hacking existing systems. Since then, some standard solutions have started to emerge in the Ubisoft ecosystem, and our platform is part of the effort to ease the integration.

Building an ML Platform Fit for a Wizard: An Ubisoft Use Case

Introduction

Our platform was initiated in 2018, inspired by the work of the Montreal User Research Lab at Ubisoft MTL. This team developed a solution for serving recommendations in an in-game store using the analytic platform.

Still, the project was not standardized and imposed significant constraints on the analytic platform, which was not designed to handle it. As a result, our team was formed with the goal of designing a more scalable platform for data scientists and machine learning engineers to build ML pipelines that could be easily integrated into the Ubisoft ecosystem.

So let’s address the elephant in the room right now: the name of our platform. It’s called Merlin, and the logo has evolved throughout the years.

We are not the only Merlin in ML town; there is one at NVIDIA that is more focused on deep learning-based recommender systems and one at Shopify that is more similar to our platform. Fun fact about the naming: we had plenty of possibilities in the summer of 2018, and the finalists were Merlin and Merlan (and we preferred a mage to a fish).

Let’s now see the platform in more detail.

Components

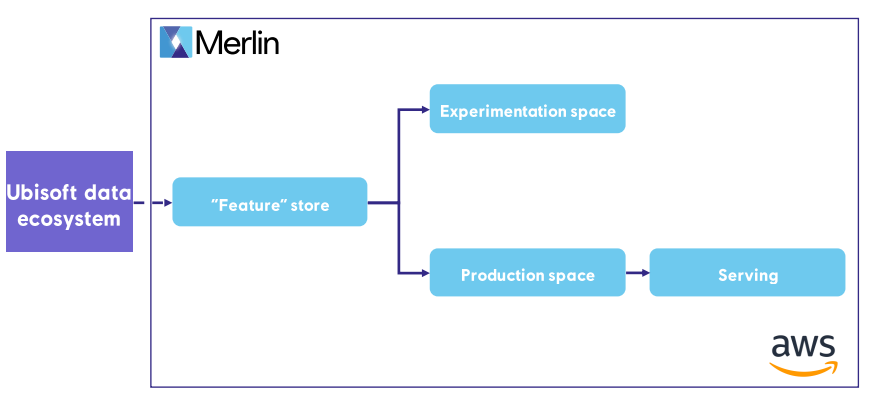

Our platform was inspired by Uber’s Michelangelo platform, publicized in 2017, and primarily focused on serving recommender systems.

It sits outside the Ubisoft data ecosystem and is built on top of AWS with the following components:

- A “feature store”: A repository for storing ‘features’ used to train and deploy machine learning models.

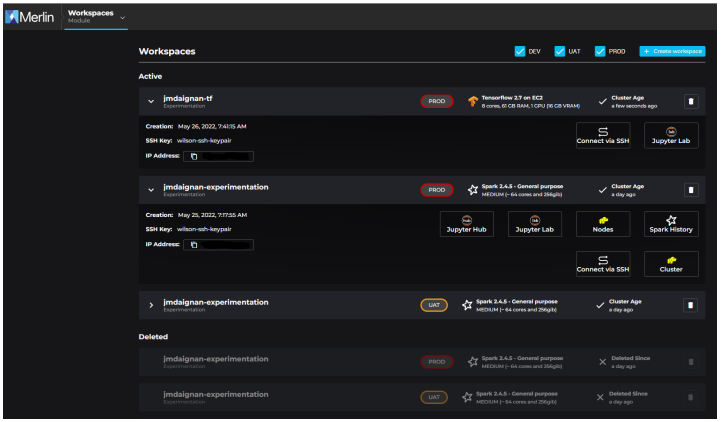

- An experimentation space: A workspace designed to experiment with different models using Jupyter.

- A production space: This is where machine learning models are trained, scored, and monitored.

- Serving: the area where predictions are produced or served based on the kind of use case (batch or live predictions)

The “feature store” is currently built on top of S3 and the AWS Glue Data Catalog; it’s not a traditional feature store but rather a collection of databases and tables that we use to store the data needed and produced during the execution of an ML pipeline or experiment. The connection with the Ubisoft data ecosystem happens via a step that syncs data to our platform and can be executed at the end of any ETL that processes data used in an ML project.

The various spaces (experimentation and production) are built on the same infrastructure foundation: EMR and EC2. Most of our clients use Spark for ETL and MLlib for training and scoring, but we recently added the ability to use a single EC2 instance (with or without GPU) to work in a non-distributed way.

The experimentation space is there to explore new approaches for a project (like doing offline experiments) or dig into the served predictions; it’s also accessible via SSH, so it’s easy to experiment with new packages in it.

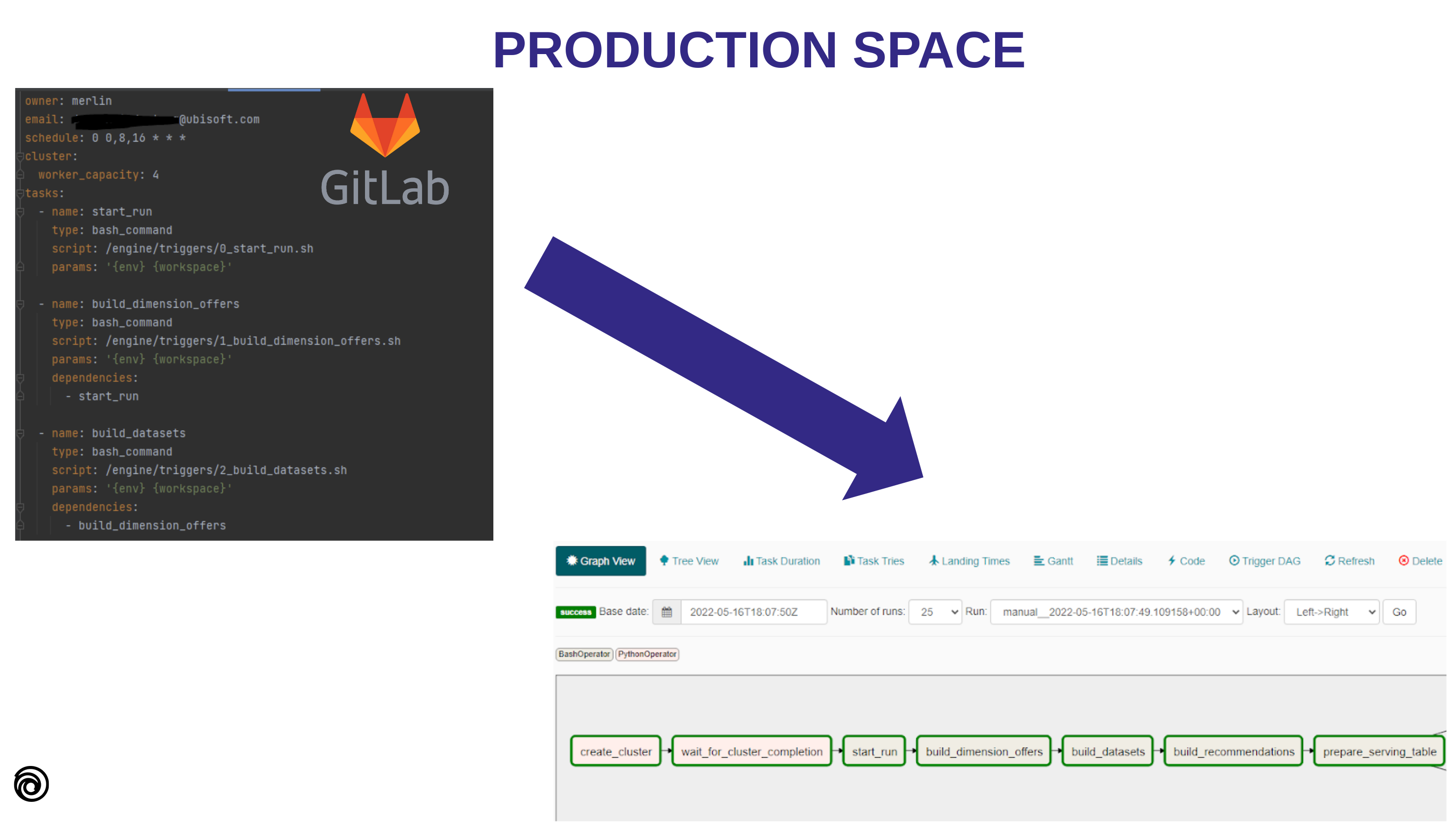

Users do not interact directly with the production space; instead, they go through an Airflow instance hosted on AWS. Our instance triggers the production space, and all the DAGs are set via a GitLab repository linked to the project. This connection is facilitated by a tool called Optimus, and the DAGs are defined via a YAML file in the repository.

It allows the users to define:

- the schedule of the DAG

- the order of the steps

- the kind of machine they want to use

(The file header was recently upgraded to support GPU machines, but the overall idea is the same.)

This approach gives a lot of flexibility to users who want to execute a DAG regularly (with no obligation to serve something). The deployment is done via a manually triggered CI/CD pipeline.
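To make the three settings above more concrete, here is a minimal sketch of what such a definition could look like once parsed. It is not the actual Optimus schema: the field names (`schedule`, `steps`, `machine`, `depends_on`), the machine labels and both helper functions are hypothetical. The definition is written as the Python dict a YAML parser would return, and the step order is resolved with the standard library `graphlib` module (Python 3.9+).

```python
from graphlib import TopologicalSorter

# Hypothetical pipeline definition, written as the dict a YAML parser would return.
# Field names and machine labels are illustrative, not the real Optimus schema.
pipeline = {
    "schedule": "0 6 * * *",  # cron expression: every day at 06:00
    "steps": [
        {"name": "etl", "machine": "emr", "depends_on": []},
        {"name": "train", "machine": "ec2-gpu", "depends_on": ["etl"]},
        {"name": "score", "machine": "emr", "depends_on": ["train"]},
    ],
}


def validate(definition):
    """Raise ValueError if a step has no machine or depends on an undeclared step."""
    names = {step["name"] for step in definition["steps"]}
    for step in definition["steps"]:
        if not step.get("machine"):
            raise ValueError(f"step {step['name']!r} has no machine")
        missing = set(step["depends_on"]) - names
        if missing:
            raise ValueError(f"step {step['name']!r} depends on unknown steps: {sorted(missing)}")


def execution_order(definition):
    """Return step names in an order that respects every depends_on entry."""
    graph = {step["name"]: set(step["depends_on"]) for step in definition["steps"]}
    return list(TopologicalSorter(graph).static_order())


validate(pipeline)
print(execution_order(pipeline))
```

Keeping the schedule, the dependencies and the machine type in one declarative file means the repository stays the single source of truth for the DAG, which fits the GitLab-driven deployment described above.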

Finally, the serving part of our platform currently offers two ways to serve predictions:

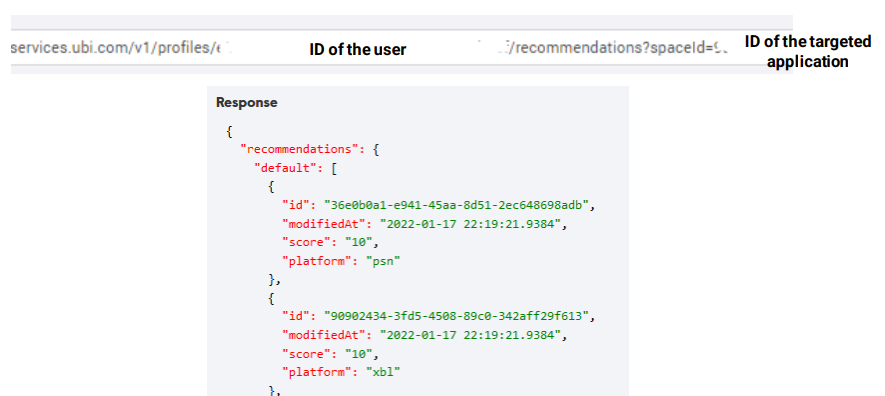

- Batch mode: Predictions are regularly updated by the production space, stored in DynamoDB, and retrieved via an API.

- Live mode: A recent addition to the platform, this feature is built on a Kubernetes cluster with Seldon Core, among other components, and can be accessed through the API.

The API is a critical part of the platform and needs to meet specific performance expectations. To make it easier to integrate with other systems, it’s callable via an internal service called Ubiservices, which is standard at Ubisoft and used by all games and services. Here is an example of the API endpoint for retrieving batch predictions.

A vital aspect of the serving space is the concept of fallbacks. These default lists are used as a backup in case there are no predictions available to send. It is an important safety measure in case of failure in the machine learning component, and it’s generally a good practice for any machine learning system. We also encourage programmers integrating with the API to have their own fallback in case our API is not reachable (for example, in case of a cloud provider outage).

Now let’s look at a user’s workflow in more detail.
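The two levels of fallback described above can be sketched as follows. This is an illustration under stated assumptions, not the Merlin implementation: the function names are hypothetical, a plain dict stands in for the prediction store, and the API call is passed in as a function so the example stays self-contained.

```python
from typing import Callable, Dict, List


def serve_recommendations(
    user_id: str,
    predictions: Dict[str, List[str]],
    fallback: List[str],
) -> List[str]:
    """Server side: return the stored predictions, or the default list if none exist."""
    items = predictions.get(user_id)
    # A missing key or an empty list both mean there is nothing useful to send.
    return items if items else fallback


def client_recommendations(
    user_id: str,
    call_api: Callable[[str], List[str]],
    local_fallback: List[str],
) -> List[str]:
    """Client side: use the API, but fall back locally if it cannot be reached."""
    try:
        return call_api(user_id)
    except (ConnectionError, TimeoutError):
        # For example, a cloud provider outage: the game still gets a usable list.
        return local_fallback


# Hypothetical data: the dict stands in for the prediction store.
stored = {"player-1": ["item-a", "item-b"]}
default_list = ["bestseller-1", "bestseller-2"]

print(serve_recommendations("player-1", stored, default_list))
print(serve_recommendations("player-42", stored, default_list))
```

The server-side fallback covers a failure of the ML pipeline, while the client-side fallback covers a failure of the serving layer itself, so the two are complementary rather than redundant.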

Game On: Workflow and Use Cases in Ubisoft’s ML Platform

Workflow

To become a platform user, you first need to request access and have an actual use case that aims to go into production.

With these conditions met, the main entry point to the platform is the portal; this is where users can access their workspace to experiment with models or check their features and predictions.

This portal is also an excellent place to interact with the pipeline and update the fallbacks for a specific application directly from a UI.

There are also guidebooks in the portal that help a data scientist or an online programmer use our platform (and set the correct permissions for applications and people to interact with the platform).

Alongside the workspaces, we gave users the ability to track their experiments or models via a mlflow instance hosted on our platform; we started to use mlflow in 2019.

It offers a good trade-off between maintenance effort and adoption in the ML community. However, we are starting to see new needs related to visualization and permissions that the current open source mlflow does not cover, whereas a solution like clearML does.

Also, mlflow is used to track machine learning pipelines running in production. This is useful because it allows us to link DAG execution with mlflow experiments and runs, to version datasets and predictions, and to go back in time to check the accuracy of predictions or the quality of the data used for training or scoring. Again, this helps ensure our machine-learning processes’ reliability and transparency.

Our platform comes with a Python package that we call merlin-SDK, which offers a set of modules to ease the life of ML practitioners on our platform.

It tackles some aspects of an ML pipeline, such as:

- Online services: to interact with the online service of Ubisoft

- Alerting: to quickly write and send an alert via different channels (slack, teams or email)

- Helper functions: to interact with data storage in an EMR or EC2 context, manipulate date objects, or do recurring data processing

- Tracking of ML pipelines/experiments: currently, these functions are built on top of mlflow, but we made the naming agnostic of the system used.

- Data quality: to check the data produced by ETL or before training when different data sources are joined

- AB testing: doing an AB test is essential in the production context, so we added some functions recently to ease the process of making an AB test and evaluating impacts.

This package is an inner source project, so our users can also participate in its construction.
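As an illustration of the AB testing item in the list above, here is a minimal sketch of one common building block: deterministic assignment of a player to a variant by hashing. This is not the merlin-SDK API; the function name, its parameters and the identifiers used are all hypothetical.

```python
import hashlib
from typing import Sequence


def assign_variant(
    user_id: str,
    experiment: str,
    variants: Sequence[str] = ("control", "treatment"),
) -> str:
    """Map a user to a variant; the same inputs always give the same variant."""
    # Salting with the experiment name keeps assignments independent across experiments.
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % len(variants)
    return variants[bucket]


# Hypothetical identifiers, for illustration only.
first = assign_variant("player-123", "store-reco-v2")
second = assign_variant("player-123", "store-reco-v2")
print(first == second)  # the assignment is stable across calls
```

Because the assignment depends only on the user and experiment identifiers, no assignment table has to be stored, and the batch pipeline and the serving API can recompute the same split independently.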

It’s important to note that not all teams need to use all of the platform’s components. For example, some teams may only use the serving part because they already have a pipeline running at Ubisoft and have not yet transitioned it to the platform. This allows teams to adopt the platform on a more flexible, “a la carte” basis, making it easier to integrate into their workflow.

And with that, let’s see where the platform is used in the Ubisoft ecosystem.

Use cases

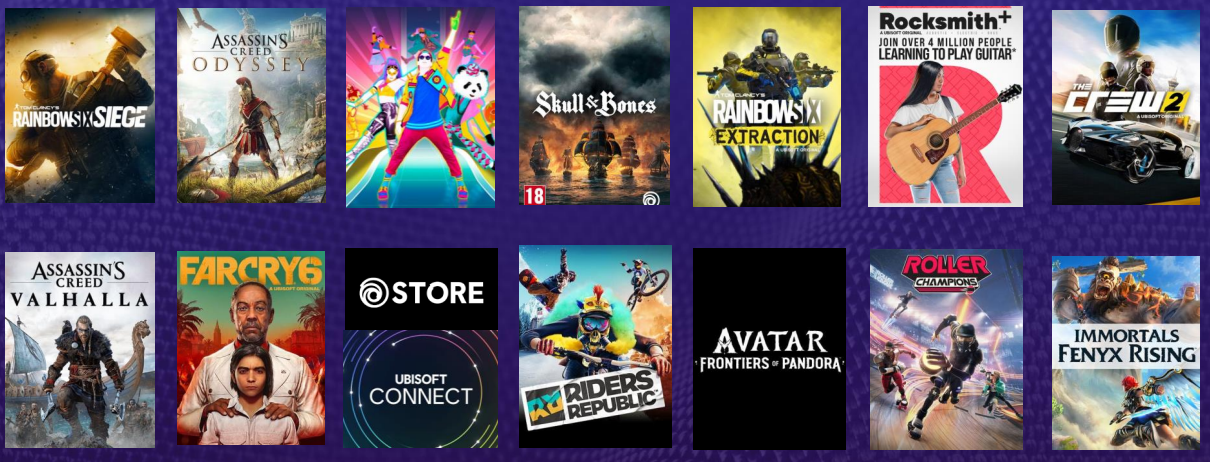

Currently, our platform is powering multiple projects (around 15) around Ubisoft, from in-game personalization (in-game content) to out-of-game personalization (friends and store) or ML-enhanced services (like cheater detection). Most of our use cases are personalization systems; here is an overview of the games where the platform serves (or will serve) predictions.

This platform is used by multiple teams at Ubisoft from San Francisco, Montreal, France and China (and the list is growing). Some of the games are supported by the data scientists of our team (like me). Our team recently delivered a live prediction feature for Just Dance 2023, which allows the game to predict the next song to play based on the actions of the current session. We worked with the Player Analytics France team to deploy their model and enhance the gameplay experience in the game. You can find more information about this project HERE.

If you want more details about the deployed applications, I invite you to have a look at the talk with two former colleagues on leveraging the serving part of Merlin to power a friend recommender system in Ubisoft Connect.

This concludes the presentation of the ML platform we built over the years.

Wrap-up

After more than four years, our platform has reached an impressive level of maturity and usage, and it brings value on a day-to-day basis at Ubisoft, but there is so much more to do.

An essential aspect of our upcoming work is mutualization, that is, pooling our efforts with other teams. We are not the only team working on machine learning in production at the Ubisoft Data Office (although we are the only team working on a platform) or at Ubisoft more generally. Recently, we merged with teams in France and China specializing in player safety/support, for example operating fraud detection systems (more in the following video).

This new expertise will enable us to improve the platform in various ways, such as making it more cost-efficient and user-friendly and adding new services. In addition, we aim to continue supporting our internal users and making the platform even more valuable to them.

I hope you enjoyed the read.